Do It Yourself NoSql

22 May 2014In this post I will explore the possibility to write CRUD web applications without using a relational or nosql database - have the data tier collapse into the application. I'll show that within certain constraints it is a fast and fun thing to do, very similar to building a prototype, but one that exhibits the characteristics of production-quality software. The resulting architecture is somewhat similar to Redis, except that it would be a (tiny) library instead of a separate server.

The basic idea is simple: have a single process single threaded application. Use a high level language supporting green threads with async I/O (cooperative multitasking within the application) to make it responsive and appear doing multiple things at once, but without the difficulties of real multithreaded programming (no locks or mutexes necessary). Implement simple on-disk persistence.

Let's see what all this means and how the various pieces of this puzzle fall into places. There are certain assumptions that seem disturbing but can be relaxed with a bit more software infrastructure - notably that the data must fit into memory when represented in the language's natural data structures. I will use Python, although other stacks can be used with similar effect, notably node.js.

People use databases for query/indexing functionality, persistence and easy concurrent code. Let's look how we would handle all these.

Data model, indexing and queries

As we'll keep everything in memory, we'll just use the language facilities to organize data, modify and query them. This could be a big topic, but I won't go into much details here. A good way to start would be a structure of nested lists and dicts/hashes that starts from a root variable and can be traversed using normal language syntax. Queries are easily expressed in Python with list comprehensions. Here is an example structure for a web app with registered users, containing mostly login info:

root = {

"[email protected]": {

"email": "[email protected]",

"salt": "...",

"passhash": "...",

"data" : {},

"options" : {

"newsletter": True

}

},

"[email protected]": {

...

}

}

At login attempts you would look up the user's email address directly in the root, and generally you should structure your data in such a way that you don't need to iterate in order to find a piece of data. The data structures available in the standard library may not be enough, for example you might need to use something like blist for ordered collections with fast lookup. You might also want to use dedicated classes instead of generic dicts for your objects (using them with _slots_ would also reduce memory usage).

A query showing the percentage of users who opted in for your newsletter would look like:

sum(u['options']['newsletter'] for u in root.values()) / float(len(root))

Easy concurrent code - Atomic, Isolated and Consistent

Typical web applications are written so that the only shared state resides in the database. It takes care of concurrent access to the shared state - which is what the first three letters from ACID are about. We will keep all data in memory and use event driven architecture (single process, single thread) instead.

In Python I do it with gevent. Gevent lets you write code that does asynchronous network I/O. Node.js is similar, but it uses callbacks while gevent uses coroutines. Many people don't realize that the code they write with gevent or node.js has very different concurrency properties than the code that uses normal threads. When you use normal threads, you are exposed to race conditions everywhere in your code except where you use explicit concurrency primitives to avoid it. When you use gevent or node.js, there is a single process, so a single address space holding all of your data, and a single thread modifying them. Whenever you do I/O, the context switches to a different green thread (gevent) or different callback (node.js), creating the illusion of parallel execution. But now we can have race conditions only on the I/O boundaries, where execution switches. As long as we don't have I/O, the code executes as an atomic unit and no one interrupts it.

An example: counter

To make this concrete, consider the example of a global counter implemented with the bottle framework in Python:

#!/usr/bin/python

import gevent

from gevent import monkey;monkey.patch_all()

# hold all global state here

root=dict(cnt=0)

from bottle import route, run

@route('/')

def inc():

n = root['cnt']

# gevent.sleep is a yield statement, it switches to a different greenlet

# network I/O would have the same effect

# uncommenting it will result in incorrect count

#gevent.sleep()

root['cnt'] = n+1

return "Hello World #%i " % n

run(server='gevent', host='localhost', port=8080, debug=False)

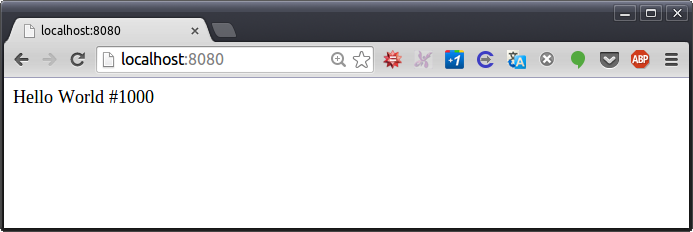

Implemented in native OS threads without synchronization this would result in a race condition, and the counter will be less than the number of requests. With gevent, when you run the code as presented, you get:

nik@occam:~$ ab -n 1000 -c 10 http://localhost:8080/

[...]

Requests per second: 2555.79 [#/sec] (mean)

Then you can see there is no race condition and the counter is exact. Note that it is also fast, as data are in memory, and there is no locking nor interprocess communication.

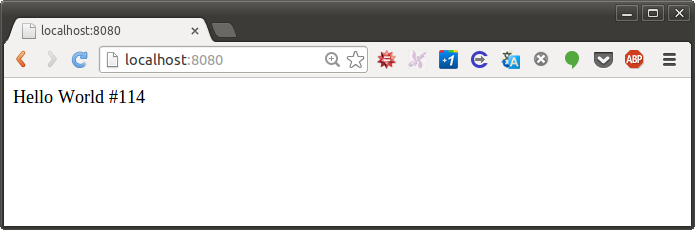

The commented gevent.sleep(0) line does a "yield", meaning that the current greenlet pauses and lets other greenlets run (which also happens when you do I/O). For our purposes this is the same as preemption by another thread at this point. If you uncomment it and re-run the test, sure enough, the counter would no longer be correct:

This basically means that as long as we are conscious about the I/O in our code, we can write multitasking code and reason about it fairly easily. In fact, a CRUD application that doesn't call external services, would have each HTTP request executed in one piece, with no internal I/O boundaries.

With node.js the I/O boundaries are clearly delineated by callbacks. Everything within a function executes atomically. In gevent the I/O boundaries that matter are just network I/O (disk I/O is blocking in gevent, so it doesn't cause switching to a different greenlet).

ACI

Let's ensure we cover the first 3 of the ACID database properties for code between two I/Os:

Atomicity - all code executes or nothing does. In fact, the system may crash in the middle of our code executing, but this cannot be observed from the client (web browser). If we call external services from our code, they could observe non-atomic behavior.

Consistency - we only transition between valid states. As long as the system is in valid state at the I/O calls, this property would hold. For a simple CRUD with no external services this means the programmer must make sure it is in valid state at the end of each HTTP request, which should be the case anyway. If our application only reads data from an external service, it should do so in transient variables before starting to modify its state.

Isolation - the transactions appear to be executed serially, because they are in fact executed serially.

Before I handle durability in the next section, I want to point out that a nice use case for the technique as presented so far would be implementing a multiplayer poker game - there is plenty of shared server side state, it's all transient (for the duration of the game), but concurrent updates to the state become easy. It is important not to do too much computation in one chunk as this would increase latency in handling other requests that have come during this time.

Durability

Real durability would make sure application state is fully persisted on disk before returning from an HTTP handler. We'll do this in a later post, and for now I'll show how to persist everything every minute or so without breaking the nice properties we have established already. We can call this eventual durability by analogy with eventual consistency :)

Let's dump a JSON image of the state on disk. In order to produce consistent image, we need to serialize it at once, with no I/O boundary in the middle, and this is the slowest part of the process. Actual writing of the file is an I/O boundary in node.js and synchronous operation with gevent. Here is a simple example that saves the state every minute (you can add this to the counting example above):

import tempfile, json, os

def saveSnapshot(root):

def doSave():

with tempfile.NamedTemporaryFile(delete=False) as fw:

tempname = fw.name

json.dump(root, fw)

# had to add the following 2 lines to avoid data loss

fw.flush() # flush any userspace buffers

# fsync would block the process

# so run os.fsync(fw.fileno()) in a thread pool

gevent.get_hub().threadpool.apply(os.fsync, (fw.fileno(),))

# note os.fsync is native and doesn't engage GIL,

# allowing other code to run

os.rename(tempname, 'snapshot.json')

try:

while True:

gevent.sleep(60)

doSave()

except gevent.GreenletExit:

doSave()

gevent.spawn(saveSnapshot, root)

Note that it doesn't fsync (guarantee it's written to disk when the code completes). The write system call returns as soon as the data are in OS's buffers, so it would be fast. The precise moment the data hit the disk doesn't matter because the previous snapshot would be available. Data integrity should also be OK because the file system is supposed to take care and do the rename only after it has fully written the file. Nevertheless I'll provide an fsyncing implementation in a follow up.

Turned out the OS (at least Ubuntu 12.04 with ext4) doesn't guarantee renaming would happen after the file is written to disk. In fact, it would happily rename the file before committing new snapshot to disk, thus losing the old snapshot. Power loss in this moment leaves you with truncated, unusable snapshot. So I fsync the file here, in gevent's default threadpool so that I don't block the main thread from serving requests while it is fsyncing. I don't fsync the rename operation, but it's guaranteed rename will happen after writing the snapshot to disk.

Scalability bottlenecks

Here are the bottlenecks you are likely to encounter as you scale the application. I'll provide guidelines and fixes for them in follow up posts:

- Snapshot becomes too big to serialize at once

- Snapshot becomes too big to fsync to disk at once

- State doesn't fit in RAM

- A single core can't handle all processing

An initial implementation using this architecture should be about as expensive as a throwaway prototype, so don't be afraid to start with it. When you need scale you can rewrite it using anything else with the benefit that you will know more about both your users' problem and how its technical solution should be implemented.

Redis comparison

Redis is built using very similar principles internally: it is single threaded so no complex locking or synchronization is necessary. We have also implemented one of the Redis persistence mechanisms: RDB snapshotting. Redis does the snapshotting somewhat differently: it forks the database process and the parent continues serving requests while the child serializes the data. The memory after fork() is separate, so the new request won't affect the snapshot in progress. We could do this in Python too (node.js lacks real fork) and avoid blocking the http server while serializing. However, this mechanism works better for Redis than it would in a high level language library. This is because Linux implements the logical separation of memory after fork() using copy-on-write, so the memory pages are actually shared between the parent and the child until the parent (that continues serving database requests) modifies some of them (at which point the OS produces a separate copy of the modified pages).

This works well in C, but in Python the OS would actually copy the memory of the parent process immediately. This costs CPU and uses twice the memory on snapshotting. Why would the memory split? Because of the automatic memory management in Python, which uses reference counters and stores them with the memory objects. While you traverse the object graph in order to serialize it, you would modify these reference counters and the OS would copy all the memory. This behavior is not unique to Python, the automatic memory management of other high level languages like Ruby interferes with the virtual memory copy-on-write mechanism in a similar way.

What is the difference between writing a Python app using DIY NoSql and a Python app using a Redis back-end? With Redis the data have to cross inter-process boundary to move between the application process and the database process. In fact, they often have to do it multiple times per http request with latencies and CPU usage to serialize/deserialize data adding up. That's why Redis got Lua scripting - to be able to do application logic close to the data. Actually Redis with scripting is similar to the DIY NoSql architecture, except that now you can use languages other than Lua for scripting and the data storage mechanisms are embedded in the language vs the language being embedded in the data storage mechanisms.

Use cases

There are a couple of public apps running on this architecture.

t1mr.com

t1mr.com is a service that pings your site every minute to verify it is running (and might try to restart it if it's down and your hosting provider has the API). I wanted the basic functionality to be free so it had to be very efficient. It cycles all the sites every minute, so an in-memory database is a good fit for it. It is network bound and can monitor tens of thousands of sites using a single core.

fleetnavi.com

fleetnavi.com is a service that optimizes delivery routes. It solves a vehicle routing problem (a generalization of traveling salesman problem with multiple vehicles, time, capacity and other constraints) using Google Maps data. There are no particular performance constraints requiring the DIY NoSql, but it was the simplest and most flexible option. The optimization solvers run in external processes, so they don't block the web server.